
Diagonal matrices occur in many areas of linear algebra. Because the matrix operations and the eigenvalues and eigenvectors of a diagonal matrix have such a simple description, it is typically desirable to represent a given matrix or linear map by a diagonal matrix.

Some basic facts: a matrix is diagonal if and only if it is triangular and normal. A matrix is diagonal if and only if it is both upper- and lower-triangular. The identity matrix I n and the zero matrix are diagonal. The square of a 2×2 matrix with zero trace is always diagonal.

In fact, a given n-by-n matrix A is similar to a diagonal matrix (meaning that there is a matrix X such that X⁻¹AX is diagonal) if and only if it has n linearly independent eigenvectors. Such matrices are said to be diagonalizable. Over the field of real or complex numbers, more is true. The spectral theorem says that every normal matrix is unitarily similar to a diagonal matrix (if AA* = A*A, then there exists a unitary matrix U such that UAU* is diagonal). Furthermore, the singular value decomposition implies that for any matrix A, there exist unitary matrices U and V such that U*AV is diagonal with nonnegative entries.

In operator theory, particularly the study of PDEs, operators are particularly easy to understand and PDEs easy to solve if the operator is diagonal with respect to the basis with which one is working; this corresponds to a separable partial differential equation. Therefore, a key technique for understanding operators is a change of coordinates (in the language of operators, an integral transform) that changes the basis to an eigenbasis of eigenfunctions, which makes the equation separable.
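The similarity criterion and the zero-trace fact can be checked by hand on small cases. The following is a minimal sketch in plain Python, assuming a sample matrix whose eigenvectors are known in advance; the helper names (`matmul`, `inverse_2x2`, `is_diagonal`) and the matrices `A`, `X`, and `T` are illustrative choices, not part of the original text. Exact rational arithmetic is used so that the off-diagonal entries come out as exact zeros.

```python
from fractions import Fraction

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def inverse_2x2(m):
    """Invert a 2x2 matrix exactly; raises ZeroDivisionError if singular."""
    (a, b), (c, d) = m
    det = Fraction(a * d - b * c)
    return [[d / det, -b / det], [-c / det, a / det]]

def is_diagonal(m):
    """True if every entry off the main diagonal is zero."""
    return all(m[i][j] == 0
               for i in range(len(m))
               for j in range(len(m[0])) if i != j)

# Sample matrix (an assumption for illustration) with eigenvalues 5 and 2.
A = [[4, 1], [2, 3]]
# Columns of X are two linearly independent eigenvectors: (1, 1) and (1, -2).
X = [[1, 1], [1, -2]]

# Similarity transform X^{-1} A X; the eigenvalues land on the diagonal.
D = matmul(inverse_2x2(X), matmul(A, X))

# A 2x2 matrix with zero trace: its square is -det(T) times the identity.
T = [[3, 5], [7, -3]]
T_squared = matmul(T, T)

print(D, is_diagonal(D))
print(T_squared, is_diagonal(T_squared))
```

Because the columns of `X` are eigenvectors of `A`, the product `A X` just scales each column by its eigenvalue, and multiplying by the inverse of `X` leaves those scale factors on the diagonal.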

Elements of the main diagonal can be either zero or nonzero. The adjugate of a diagonal matrix is again diagonal.

For a diagonal matrix A with diagonal entries λ 1, ..., λ n, each standard basis vector e i satisfies A e i = λ i e i. The resulting equation is known as the eigenvalue equation and is used to derive the characteristic polynomial and, further, eigenvalues and eigenvectors. In other words, the eigenvalues of diag(λ 1, ..., λ n) are λ 1, ..., λ n, with associated eigenvectors e 1, ..., e n.
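The eigenvalue equation for a diagonal matrix can be verified directly on a small case. The sketch below is illustrative only: the helpers `diag`, `matvec`, and `standard_basis` and the sample diagonal entries are assumptions made for the example, and a zero is included on the diagonal to show that it is allowed.

```python
def diag(values):
    """Build a square diagonal matrix (list of rows) from its diagonal entries."""
    n = len(values)
    return [[values[i] if i == j else 0 for j in range(n)] for i in range(n)]

def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(row[k] * v[k] for k in range(len(v))) for row in m]

def standard_basis(n, i):
    """Return the i-th standard basis vector of length n (0-based index)."""
    return [1 if k == i else 0 for k in range(n)]

# Sample diagonal entries (hypothetical); zero entries are permitted.
lambdas = [2, -1, 0]
D = diag(lambdas)

# Check D e_i == lambda_i e_i for every standard basis vector.
eigen_checks = []
for i, lam in enumerate(lambdas):
    e_i = standard_basis(len(lambdas), i)
    eigen_checks.append(matvec(D, e_i) == [lam * x for x in e_i])

print(eigen_checks)
```

Multiplying by a standard basis vector simply picks out one column of the matrix, and each column of a diagonal matrix has at most one nonzero entry, which is why the check succeeds for every index.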

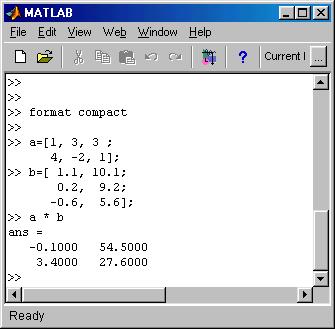

In linear algebra, a diagonal matrix is a matrix in which the entries outside the main diagonal are all zero; the term usually refers to square matrices. Put another way, it is a matrix whose only nonzero elements are on its main diagonal.

A function speeds up under concurrent execution when several conditions hold. The function performs operations that easily partition into sections that execute concurrently. These sections must be able to execute with little communication between processes, and they should require few sequential operations. The data size is large enough that any advantages of concurrent execution outweigh the time required to partition the data and manage separate execution threads; for example, most functions speed up only when the array contains several thousand elements or more. The operation is not memory-bound; that is, processing time is not dominated by memory access time. As a general rule, complicated functions speed up more than simple functions.

The matrix multiply (X*Y) and matrix power (X^p) operators show a significant increase in speed on large double-precision arrays (on the order of 10,000 elements). The matrix analysis functions det, rcond, hess, and expm also show a significant increase in speed on large double-precision arrays.
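To make the partitioning idea concrete, here is a minimal sketch of a matrix multiply split into independent row blocks. It uses Python's standard `concurrent.futures` module; the function names `multiply_rows` and `parallel_matmul`, the `workers` parameter, and the sample matrices are hypothetical and chosen only for illustration. The sketch shows how the work divides with no communication between sections; it is not a benchmark, and in standard CPython builds the global interpreter lock means pure-Python arithmetic like this will not actually run faster on threads.

```python
from concurrent.futures import ThreadPoolExecutor

def multiply_rows(a_rows, b):
    """Multiply a block of rows of A by the full matrix B."""
    cols = list(zip(*b))  # columns of B, computed once per block
    return [[sum(x * y for x, y in zip(row, col)) for col in cols]
            for row in a_rows]

def parallel_matmul(a, b, workers=4):
    """Compute the product A B by splitting the rows of A into chunks."""
    chunk = max(1, -(-len(a) // workers))  # ceiling division
    blocks = [a[i:i + chunk] for i in range(0, len(a), chunk)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Each block needs only its own rows of A plus read-only access
        # to B, so the workers never exchange data with each other.
        results = list(pool.map(multiply_rows, blocks, [b] * len(blocks)))
    # map() preserves input order, so the blocks reassemble row by row.
    return [row for block in results for row in block]

# Small sample inputs (assumptions for illustration): A is 8x6, B is 6x4.
A = [[i + j for j in range(6)] for i in range(8)]
B = [[(i * j) % 5 for j in range(4)] for i in range(6)]

C = parallel_matmul(A, B)
print(C == multiply_rows(A, B))
```

The only sequential steps are splitting the rows and concatenating the results, which matches the requirement that a function need few sequential operations. For inputs this small, the cost of creating threads outweighs any gain, consistent with the point that only large arrays benefit.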
